WHAT IS THE SITEMAP?

A sitemap is usually an XML file that lists the URLs available within a specific domain. Besides the list of subpages, it can also state when each address was last updated and what priority it has. Keep in mind that giving an address a high priority will not get it indexed faster. The priority only indicates how important the URL is relative to the other URLs in the site's structure.
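The following minimal sketch illustrates this structure. It builds such a file with Python's standard `xml.etree.ElementTree`. The `<urlset>`, `<url>`, `<loc>`, `<lastmod>` and `<priority>` elements and the namespace come from the sitemaps.org protocol, where priority is a value from 0.0 to 1.0. The function name `build_sitemap`, the example URLs and the dates are hypothetical.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Return sitemap XML as a string (hypothetical helper).

    entries: iterable of (loc, lastmod, priority) tuples, where lastmod is a
    datetime.date and priority is a float between 0.0 and 1.0.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod, priority in entries:
        if not 0.0 <= priority <= 1.0:
            raise ValueError(f"priority out of range for {loc}: {priority}")
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc            # absolute address of the page
        ET.SubElement(url, "lastmod").text = lastmod.isoformat()  # YYYY-MM-DD
        # Priority is relative to other URLs on the same site; rounded to one decimal.
        ET.SubElement(url, "priority").text = f"{priority:.1f}"
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


if __name__ == "__main__":
    # Hypothetical pages and dates, for illustration only.
    print(build_sitemap([
        ("https://www.example.com/", date(2024, 2, 28), 1.0),
        ("https://www.example.com/blog/", date(2024, 2, 20), 0.8),
    ]))
```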

Also keep in mind that simply placing the sitemap.xml file on the server does not mean that all subpages in the domain will be indexed right away. Googlebot needs time to review every URL included in the sitemap, but you can speed up the process by submitting the path to the sitemap.xml file in Google Search Console. This partially speeds up crawling. It also lets you monitor any errors related to the addresses listed in the sitemap. Google Search Console also lets you resubmit the sitemap whenever you change it. Every properly carried out SEO optimization should include a correctly configured sitemap file.
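Submission is usually done in the Search Console interface, but it can also be scripted. This sketch assumes the Search Console (Webmasters v3) API method `sitemaps.submit`, described as a PUT request to `/webmasters/v3/sites/{siteUrl}/sitemaps/{feedpath}` with both values URL-encoded. Check the current API reference before relying on it. The sketch also assumes you already hold an OAuth 2.0 access token for a verified property. The helper name `build_submit_request` is hypothetical. It only prepares the request and does not send it, so you can inspect it first.

```python
import urllib.parse
import urllib.request

# Assumed base URL of the Search Console (Webmasters v3) API.
API_ROOT = "https://www.googleapis.com/webmasters/v3"


def build_submit_request(site_url, sitemap_url, access_token):
    """Prepare, but do not send, a sitemaps.submit call (hypothetical helper)."""
    # Both the property URL and the sitemap URL are path segments,
    # so every reserved character (including "/") must be percent-encoded.
    site = urllib.parse.quote(site_url, safe="")
    feed = urllib.parse.quote(sitemap_url, safe="")
    return urllib.request.Request(
        f"{API_ROOT}/sites/{site}/sitemaps/{feed}",
        method="PUT",
        headers={"Authorization": f"Bearer {access_token}"},
    )
```

To actually send the request, pass the returned object to `urllib.request.urlopen`. Token handling is left out on purpose.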

HOW SHOULD A SITEMAP BE CONSTRUCTED?

In its guidelines for web developers, Google clearly states the requirements for a correctly configured sitemap file.

- One of the most important rules is that every address in the sitemap must be up to date. URLs that return 404 errors or redirect elsewhere make the site less attractive from Googlebot’s point of view.

- The URLs in the sitemap should be exact, fully qualified addresses. If the site's address is https://www.example.com/, the sitemap cannot list it as https://example.com/, and it cannot use relative addresses such as ./page_domain.html.

- A single sitemap can contain up to 50,000 URLs, and its size cannot exceed 50 MB (uncompressed). If either limit would be exceeded, split the map into smaller files and create a sitemap index, i.e. a file listing the locations of all sitemap.xml files within the domain. It is worth following a logical structure, as when designing the site architecture, with separate files for posts, subpages, informational pages, etc. A sitemap index is especially recommended when optimizing an online store with many categories and products.

- If the site has several language versions, the sitemap should also include the addresses of all translations. To do this, list the URL of each language version in the sitemap file and mark it with the hreflang attribute.
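The URL rules and size limits from the list above can be checked before the sitemap is published. This sketch uses only the Python standard library and does four things:

1. It flags relative URLs.
2. It flags URLs whose scheme or host differs from the site root.
3. It packs URLs into files that stay within 50,000 URLs and 50 MB, measured here as uncompressed UTF-8 bytes.
4. It builds a sitemap index for those files.

The function names are hypothetical. The sketch does not check HTTP status codes (404s or redirects), which would require fetching every URL.

```python
from urllib.parse import urlsplit
from xml.sax.saxutils import escape

MAX_URLS = 50_000              # URL limit per sitemap file (sitemaps.org)
MAX_BYTES = 50 * 1024 * 1024   # size limit per file, uncompressed
NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
HEADER = f'<?xml version="1.0" encoding="UTF-8"?>\n<urlset xmlns="{NS}">\n'
FOOTER = "</urlset>\n"


def invalid_urls(urls, site_root):
    """Return URLs that are relative or use another scheme/host than site_root."""
    root = urlsplit(site_root)
    bad = []
    for url in urls:
        parts = urlsplit(url)
        # A relative URL has an empty scheme and netloc, so it fails this check too.
        if (parts.scheme, parts.netloc) != (root.scheme, root.netloc):
            bad.append(url)
    return bad


def split_sitemaps(urls, max_urls=MAX_URLS, max_bytes=MAX_BYTES):
    """Greedily pack URLs into sitemap documents that respect both limits."""
    overhead = len((HEADER + FOOTER).encode("utf-8"))
    files, current, size = [], [], overhead
    for url in urls:
        entry = f"  <url><loc>{escape(url)}</loc></url>\n"
        cost = len(entry.encode("utf-8"))
        # Start a new file if this one is full by count or would exceed the byte limit.
        if current and (len(current) == max_urls or size + cost > max_bytes):
            files.append(HEADER + "".join(current) + FOOTER)
            current, size = [], overhead
        current.append(entry)
        size += cost
    if current:
        files.append(HEADER + "".join(current) + FOOTER)
    return files


def sitemap_index(sitemap_locs):
    """Build a sitemap index that lists the location of each sitemap file."""
    body = "".join(f"  <sitemap><loc>{escape(loc)}</loc></sitemap>\n"
                   for loc in sitemap_locs)
    return ('<?xml version="1.0" encoding="UTF-8"?>\n'
            f'<sitemapindex xmlns="{NS}">\n{body}</sitemapindex>\n')
```

Each string returned by `split_sitemaps` would be saved as its own file, for example sitemap-1.xml and sitemap-2.xml (hypothetical names). The index file would then be submitted in Search Console in place of the individual files. Grouping URLs by section (posts, products, categories) before calling it keeps the logical structure described above.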
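For the language-version rule, the sitemap format documented by Google works as follows. It declares the `http://www.w3.org/1999/xhtml` namespace on `<urlset>`. Each `<url>` entry then gets an `<xhtml:link rel="alternate" hreflang="..."/>` element for every translation, including the page itself. A minimal sketch that generates this from groups of translated URLs follows. The function name and the dict-per-page data layout are assumptions.

```python
from xml.sax.saxutils import escape, quoteattr

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
XHTML_NS = "http://www.w3.org/1999/xhtml"


def hreflang_sitemap(groups):
    """Build a sitemap where every translation lists all its language versions.

    groups: list of dicts, one per page, mapping a language code
    (e.g. "en", "de") to the absolute URL of that translation.
    """
    lines = ['<?xml version="1.0" encoding="UTF-8"?>',
             f'<urlset xmlns="{NS}" xmlns:xhtml="{XHTML_NS}">']
    for versions in groups:
        # One <url> entry per translation...
        for loc in versions.values():
            lines.append("  <url>")
            lines.append(f"    <loc>{escape(loc)}</loc>")
            # ...each listing every language version, including itself.
            for lang, href in versions.items():
                lines.append(f'    <xhtml:link rel="alternate" hreflang={quoteattr(lang)} '
                             f'href={quoteattr(href)}/>')
            lines.append("  </url>")
    lines.append("</urlset>")
    return "\n".join(lines)
```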

Detailed information about sitemaps is available on the official site sitemaps.org, which documents all the rules for creating sitemap files correctly.

HOW DOES THE SITEMAP AFFECT INDEXATION?

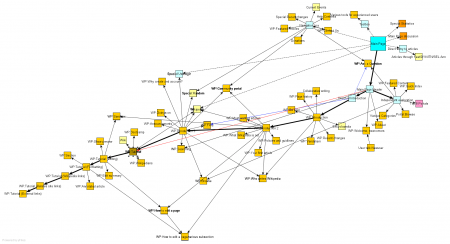

A correctly optimized sitemap file directly affects a domain's crawl budget. One of our clients illustrates how updating the sitemap with all relevant URLs affects indexing statistics. At the end of February, the client's sitemap file was updated. The chart above shows the first big jump in the number of pages indexed daily, at the turn of February and March. It was caused by resubmitting the revised sitemap in Google Search Console.

In this example, the increase in indexing statistics was caused solely by optimizing the sitemap file.

The later increase in these statistics is the effect of a correctly constructed map that Googlebot can navigate efficiently. Note also the drop in time spent downloading the site, which means the crawler goes through the entire site much faster. In addition, this client's sitemap file is dynamic, i.e. it is updated automatically whenever a URL is added to or removed from the site. A sitemap built this way helps ensure that, after later changes to the site structure, indexing robots pick up all the changes faster.
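A dynamic sitemap like the one described above can be sketched as an object that regenerates the XML whenever a URL is published or removed. The class `DynamicSitemap` and its methods are hypothetical. On a real site they would be called from the CMS or store's save and delete hooks, and the result would be written to sitemap.xml or served directly.

```python
from datetime import date
from xml.sax.saxutils import escape

NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


class DynamicSitemap:
    """Keeps sitemap XML in sync with the set of published URLs (hypothetical helper)."""

    def __init__(self):
        self._pages = {}          # URL -> date of last modification
        self.xml = self._render()

    def publish(self, url, modified=None):
        """Add or update a URL, then regenerate the sitemap."""
        self._pages[url] = modified or date.today()
        self.xml = self._render()

    def unpublish(self, url):
        """Remove a URL (e.g. a deleted product), then regenerate the sitemap."""
        self._pages.pop(url, None)
        self.xml = self._render()

    def _render(self):
        # Sorted so the output is stable between regenerations.
        rows = "".join(
            f"  <url><loc>{escape(u)}</loc><lastmod>{d.isoformat()}</lastmod></url>\n"
            for u, d in sorted(self._pages.items())
        )
        return (f'<?xml version="1.0" encoding="UTF-8"?>\n'
                f'<urlset xmlns="{NS}">\n{rows}</urlset>\n')
```

Regenerating the whole file on every change is the simplest choice. Very large sites would more likely rebuild only the affected part of a sitemap index.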

Picture credit: Wikipedia